Why do people feel like their academic fields are at a dead end?

Perhaps because academia isn't optimized for novelty.

In recent years, I’ve noticed a lot of thinkpieces in which people talk about their academic fields hitting an impasse. A recent example is this Liam Bright post on analytic philosophy:

Analytic philosophy is a degenerating research programme. It's been quite a long time since there was anything like a shared project…People are not confident it can solve its own problems, not confident that it can be modified so as to do better on that first score, and not confident its problems are worth solving in the first place…[T]he architectonic programmes of latter 20th century analytic philosophy seem to have failed without any clear ideas for replacing them coming forward…[W]hat I think is gone, and is not coming back, is any hope that from all this will emerge a well-validated and rational-consensus-generating theory of grand topics of interest.

Another example is Sabine Hossenfelder’s 2018 book Lost in Math: How Beauty Leads Physics Astray, which is about how high-energy particle physics has made itself irrelevant by pursuing theories that look nice instead of trying to explain reality. This follows on the heels of a number of pieces noting how the Large Hadron Collider has failed to find evidence of physics beyond the Standard Model (though we still have to wait and see if the muon g-2 anomaly turns out to be something new). And of course there have been a number of books and many articles about the seeming dead end in string theory.

Meanwhile, over at Bloomberg, my colleague Tyler Cowen thinks economics hasn’t done a lot to enlighten the world recently. I personally disagree with that, given all the great and very accessible empirical work that has come out in recent years, but Tyler might be thinking mainly about economic theory — back in 2012, he told me that he thought there had been no interesting new theoretical work since the 90s.

There are other examples. In 2013, Keith Devlin fretted that advances in math itself might grind to a halt. Psychologists worry that their field will be rendered irrelevant by neuroscience. In general, if you pick an academic field X and google “the end of X”, you’ll find an essay from the last decade wondering if it’s over — or declaring outright that it is.

Is this normal? Maybe academics just always tend to think their fields are in crisis, until the next big discovery comes along. After all, some people thought physics was over in the late 19th century, just before relativity and quantum mechanics came along. Maybe the recent hand-wringing is just more of the same?

Perhaps. On the completely opposite side, there’s the “end of science” hypothesis — the idea that most of the big ideas really have been found, and now we’re sort of scraping the bottom of the barrel for the Universe’s last few remaining secrets. This is the uncomfortable possibility raised by papers like “Are Ideas Getting Harder to Find?”, by Bloom et al. (2020).

But in fact, I have a third hypothesis, which sort of strikes a middle ground. My conjecture is that the way that we do academic research — or at least, the way we’ve done it since World War 2 — is not quite suited to the way discovery actually works.

Researchers or teachers?

Think about how a modern university works. Each tenured or tenure-track professor has two jobs — research and teaching. America followed the German model of combining these two activities, and then made some modifications of our own. We don’t often think about why research and teaching are combined; that’s a subject for a whole other post. But they are combined.

And that means that the number of research-doing professors is determined not by the need for actual research, but by the demand for undergraduate education. As the American population grows and more people enroll in college, universities must hire more profs to instruct them. Despite the trend toward “adjunctification” — classes being taught by lower-paid non-research faculty in order to save money — universities would still rather have undergrads being taught by research faculty. Furthermore, this demand is department-specific — if more undergrads major in econ, the university will probably hire more econ profs.

This means that the U.S. university system — and the systems of other countries that roughly copy what we do — is filled with professors who have been essentially hired to be teachers, but who prove their suitability for the job by doing research. They have to publish or perish, whether or not there’s anything interesting to publish. And journals, knowing that shutting researchers out of jobs would be detrimental to the academic system, will oblige young researchers by publishing enough papers that tenure-track faculty around the country can get tenure.

That’s probably going to lead to a lot of research that’s pretty incremental. Yes, throwing more researchers into the fray will increase the overall rate of scientific discovery, and will usually produce a strongly positive social return on investment (at least, for now). But from the perspective of any individual researcher, it must seem like less and less is getting done, because the number of new discoveries per researcher and per paper shrinks.

This is just a fancy way of saying that research has diminishing returns. We should still be funding it, of course. But it probably makes it seem like fields are at an impasse, because people are comparing the novelty of each new paper to the novelty of the average paper from a previous age, when there were far fewer undergrads and thus far less demand for publish-or-perish research.
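To make the diminishing-returns arithmetic concrete, here is a toy sketch. It assumes, purely for illustration, that total discoveries grow like a power of the number of researchers with an exponent below 1. The function names, the exponent `alpha`, and the head counts are all made up for this example; nothing here is an estimate from real data.

```python
# Toy model of diminishing returns to research effort.
# ASSUMPTION: total output = scale * researchers ** alpha, with 0 < alpha < 1.
# The functional form and every number below are hypothetical.

def total_discoveries(researchers: float, scale: float = 1.0, alpha: float = 0.5) -> float:
    """Total discoveries produced by a field with this many researchers."""
    return scale * researchers ** alpha


def discoveries_per_researcher(researchers: float, scale: float = 1.0, alpha: float = 0.5) -> float:
    """Average discoveries per researcher under the same toy model."""
    return total_discoveries(researchers, scale, alpha) / researchers


if __name__ == "__main__":
    # Quadruple the head count twice and watch the two quantities diverge.
    for n in (100, 400, 1600):
        total = total_discoveries(n)
        per_head = discoveries_per_researcher(n)
        print(f"researchers={n:5d}  total={total:6.1f}  per researcher={per_head:.4f}")
```

Under these assumptions the total keeps rising as researchers are added, while the average per researcher keeps falling, which is the gap between the social view and the individual view described above.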

So I think this is one part of the explanation. But on top of this, I think we have a system that’s set up to continue seeking answers in the same directions that yielded answers before, while the biggest returns actually come from striking out in new directions and creating new fields.

Research as mining

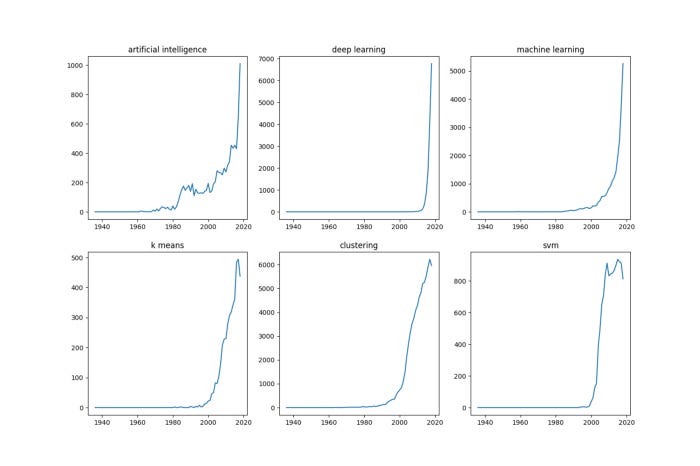

Here, via Gabriel Camilo Lima, is a chart of the number of papers published with various keywords in their titles related to the field of A.I.:

This is an absolute Cambrian explosion. The field of A.I. has officially existed since the mid-20th century, but around the year 2000 it became so much larger as to be totally unrecognizable. This explosion of research effort and interest was accompanied by some startling breakthroughs and immediate engineering applications.

Think about what was necessary to produce this burst of discovery. It was developments in electrical engineering and computer science that made it possible. Moore’s Law increased computing power, and the internet made it possible to gather data sets big enough to really make A.I. shine.

But as Bloom et al. show, Moore’s Law has been getting steadily harder to sustain, requiring greater infusions of money and labor to keep boosting computers’ abilities. In other words, the explosively fruitful field of A.I. sort of budded off of other fields that are themselves growing increasingly arduous.

A better metaphor, though, might be mining for ore. Each field of discovery and invention is like a vein of ore. The part of the vein nearest to the surface is the easiest to mine out, and it gets harder as you extract more and more. But then sometimes one leads to a new vein — then it’s back to rapid easy extraction, but in a new direction.

This kind of process will tend to make scientific progress look like a constantly expanding set of S-curves that partly overlap in time but represent different dimensions of discovery. (Sort of like how Clay Christensen models disruptive and sustaining technologies, but in this case the new things complement the old things instead of competing with them.)
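One way to picture the overlapping S-curves is to model each field as a logistic curve and add them up. This is only a sketch under assumed numbers: the logistic shape, the `midpoints` at which each hypothetical field takes off, and the function names are illustrative choices, not something taken from any data.

```python
import math

# Toy picture of progress as a sum of overlapping S-curves.
# ASSUMPTION: each field's cumulative discoveries follow a logistic curve.
# The midpoints (0, 10, 20) are hypothetical take-off times for three fields.


def logistic(t: float, midpoint: float, ceiling: float = 1.0, steepness: float = 1.0) -> float:
    """Cumulative discoveries in one field at time t."""
    return ceiling / (1.0 + math.exp(-steepness * (t - midpoint)))


def field_growth_rate(t: float, midpoint: float, ceiling: float = 1.0, steepness: float = 1.0) -> float:
    """Rate of new discoveries in one field at time t (derivative of the logistic)."""
    level = logistic(t, midpoint, ceiling, steepness)
    return steepness * level * (1.0 - level / ceiling)


def total_knowledge(t: float, midpoints: tuple) -> float:
    """Sum of cumulative discoveries across all fields."""
    return sum(logistic(t, m) for m in midpoints)


if __name__ == "__main__":
    midpoints = (0.0, 10.0, 20.0)
    for t in (0.0, 10.0, 20.0):
        rates = ", ".join(f"{field_growth_rate(t, m):.4f}" for m in midpoints)
        print(f"t={t:4.1f}  total={total_knowledge(t, midpoints):.3f}  rates by field=[{rates}]")
```

In this toy setup the oldest field's growth rate has collapsed to nearly zero by the time the newest field hits its stride, yet the total keeps climbing because the action has moved to a new curve.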

But now think about how the modern university system interfaces with this process of “ore mining”.

First, take hiring. New researchers are hired by older researchers. Older researchers will tend to hire new researchers who investigate the Universe along similar lines to what the old folks discovered — not just asking similar questions, but using similar methodologies. This isn’t just my armchair theory of researcher psychology — it’s the result of a 2019 paper by Azoulay et al. called “Does Science Advance One Funeral at a Time?”. They find:

We examine how the premature death of eminent life scientists alters the vitality of their fields. While the flow of articles by collaborators into affected fields decreases after the death of a star scientist, the flow of articles by non-collaborators increases markedly. This surge in contributions from outsiders draws upon a different scientific corpus and is disproportionately likely to be highly cited. While outsiders appear reluctant to challenge leadership within a field when the star is alive, the loss of a luminary provides an opportunity for fields to evolve in new directions that advance the frontier of knowledge.

In other words, when a respected old researcher dies, people stop looking in the old directions that researcher was used to looking in, and start looking in new ones.

Next, think about publication. Peer review means that research that tries to strike out in a new direction must conform to the expectations and desires of established researchers. In other words, to publish new kinds of stuff you have to get the blessing of people who do old kinds of stuff. If you want examples of how this has at least temporarily held back novel research efforts, check out this excellent post by Jose Luis Ricón.

Finally, think about grants. Granting agencies are known to generally favor research directions with proven track records; this naturally biases research toward established fields and established methodologies. Of course, the reality might not be so bad; Alexey Guzey reports that what usually happens is that principal investigators know how to tell granting agencies what they want to hear, and then allocate the money to grad students and postdocs to do actually useful research. But this still means that money is being allocated within labs by older, established researchers!

So it seems like the modern research university encourages the mining of old, exhausted veins of “ore”, rather than the vigorous search for new veins. Note that this is just as true in fields like philosophy or the humanities; even if the criterion for “good research” is “stuff human beings think is interesting”, a lack of novel research directions will still mean individual fields like analytic philosophy will tend to stagnate.

In other words, the simplest explanation for why it feels like a bunch of old scientific fields are stagnating is that it’s the destiny of fields to stagnate after a while, and our academic system keeps people in those fields long after they’ve stagnated.

Make it new

Perhaps this isn’t such a bad thing for humanity’s overall rate of discovery and invention. Maybe new fields are just so easy to do pioneering research in that big grants and the blessing of established researchers simply aren’t necessary; a few maverick pioneers working independently can open up the new veins, and then everyone else can follow.

But if the superstar researchers who disproportionately drive science forward are caught up in the perverse academic incentive system, spending their genius solving hard problems in old fields instead of creating new fields, then it seems like our system really could be holding progress back.

And whether or not that happens, it still leaves an awful lot of people working on scraping the last tiny scraps of ore from the old, exhausted veins — making a slightly better DSGE model, or determining the mass of the Higgs boson more precisely, or whatever. That could lead to a lot of disillusionment among people who thought their career would involve discovering the secrets of the universe.

I’m not yet sure what the solution here is. Ricón has a bunch of ideas for how to improve science funding, and the team at Fast Grants (which includes Patrick Collison) is experimenting with new approaches. I’ll be writing more about those in the near future.

But for now I want to stress one big idea: Encouraging not just novel research, but research in novel directions. Asking questions no one has asked before. Using methodologies no one has tried before. Creating new fields that don’t have an established hierarchy of prestigious journals and elder luminaries. Finding new veins of ore to mine.

I think people just have an overly rosy view of the past. In academia as in all things, there's a seemingly irresistible temptation to compare the most lastingly important work from 100 years ago--i.e. the only work we are even aware ever existed--to the entire mass of random crap being generated today, and conclude that things were better 100 years ago. There was tons of random crap 100 years ago, but most of it has been thoroughly forgotten; and obviously, none of the random crap we are generating today has lasted 100 years...yet. And it'd be nice if we could predict which bits of it will last, but we couldn't have done that 100 years ago and we can't do it now, and that's all there is to it.

And I can promise, math is absolutely not stagnating. Math is doing just great. Digging up one person worrying otherwise, out of all the mathematicians in the world, means nothing.

An additional problem you don't mention, I think, is one I can speak to from personal experience: science these days is mostly structured around one permanent group leader who spends all their time securing funding, teaching, and administrating, while the actual research is carried out by a series of young PhDs and postdocs who are rapidly cycled out of science and replaced with a new young cohort. As science becomes more complex and subtle, it takes longer and longer to reach the forefront of a field and really understand what the next big advance should be, and then it takes a sustained period of uninterrupted, focussed work to make that big step. There is no one left in the system to do that kind of work, just group leaders who are too busy and young researchers who are still learning or focussed on incremental advances to establish themselves.