Huge story in the world of industrial policy today: The U.S. Senate just passed the CHIPS Act, a bill aimed at reviving the flagging semiconductor industry. The lion’s share of the $52 billion in the bill will go to support chip companies building fabs, with some going to train workers and a bit going to research and development. There are also tax credits and some smaller related programs.

In absolute size, this is not incredibly huge. A single top-of-the-line fab costs $10-20 billion, meaning that the money in the CHIPS Act will be sufficient to build only 2 to 4 of these factories. TSMC, the Taiwanese market leader, is spending almost twice as much as the U.S. government over the next three years to build its own new facilities. And the total amount that Apple invested in China in the last few years (mostly not for semiconductors, but for other manufactured goods) was $275 billion — over five times the CHIPS Act’s total outlay.
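For anyone who wants to check the back-of-the-envelope math, here is a minimal sketch using only the figures quoted above. The function and variable names are mine, purely for illustration. It also assumes the full $52 billion went to fabs, which overstates things since only the lion's share does, so treat the fab count as a ceiling.

```python
# Figures quoted in the paragraph above, in billions of U.S. dollars.
CHIPS_TOTAL = 52
FAB_COST_LOW, FAB_COST_HIGH = 10, 20
APPLE_CHINA_INVESTMENT = 275


def fabs_affordable(budget, cost_low, cost_high):
    """Return (fewest, most) fabs a budget could cover, as unrounded ratios.

    Illustrative helper: assumes the whole budget is spent on fabs.
    """
    return budget / cost_high, budget / cost_low


fewest, most = fabs_affordable(CHIPS_TOTAL, FAB_COST_LOW, FAB_COST_HIGH)
apple_multiple = APPLE_CHINA_INVESTMENT / CHIPS_TOTAL

print(f"Fabs covered if all $52B went to fabs: {fewest:.1f} to {most:.1f}")
print(f"Apple's China investment vs. CHIPS: {apple_multiple:.1f}x")
```

Since some of the money goes to worker training and R&D rather than fabs, the realistic count lands below that ceiling.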

But CHIPS is still important, because it shows that there may be real bipartisan appetite in America for a revival of industrial policy. Seventeen Republicans joined Democrats in voting for the bill. And it does something that economists’ consensus had basically dismissed in recent decades: it deploys government resources on behalf of a specific industry.

Why did Republicans and Democrats join together to throw dominant “neoliberal” economic orthodoxy out on its ear? Well, partly because there’s a general shift away from thinking that economists’ nostrums about free trade and government non-intervention contain anything worth listening to. But in the case of semiconductors, there were some pretty big reasons on top of that. Chips were the natural industry for industrial policy to make a comeback — if it hadn’t happened in this industry, it wouldn’t have happened at all.

Why industrial policy starts with semiconductors

Semiconductors are a classic target for industrial policy in the U.S. Our last big industrial policy push came in the late 80s and early 90s, when our leaders feared that America was losing dominance in the memory chip industry to the Japanese. We created the Austin-based SEMATECH consortium — a public-private partnership between the U.S. government and 14 different chip companies, aimed at producing next-generation semiconductors. It got mixed but probably decent results, as this Peterson Institute briefing notes:

In a widely cited study, Douglas Irwin and Peter Klenow (1996) explored the difference between consortium members and nonmembers to examine Sematech’s impact on R&D outlays, profits, investment, and productivity. Their strongest finding was that Sematech reduced R&D outlays by members relative to nonmembers. Evidently, consortium members saw some substitutability between their own R&D and findings shared through Sematech. In a later econometric study, Kenneth Flamm and Qifei Wang (2003) argued that R&D sharing did not detract from Sematech’s payoff in terms of bolstering the US industry. As for the other performance variables examined by Irwin and Klenow (1996), no significant differences were found between consortium members and nonmembers…

The general consensus is that Sematech did help develop next-generation chips, but only slightly helped U.S. companies compete overseas.

Why was the U.S. so keen on boosting the semiconductor industry that it would embrace industrial policy even in the era of free-market champion Ronald Reagan? The typical reasons were all there: the urge to protect a foundational basic industry from foreign competition, concern that if semiconductors left, a bunch of industries dependent on semiconductors might follow, and so on. But there was one huge reason that outshone everything else. Semiconductors are essential for national defense.

I’m not an expert in defense tech, but the principle here is pretty simple, so I’ll try to explain it. Back in the world wars a century ago, the way we fought was to deliver as much firepower — bombs, rockets, artillery shells — into a given area within a given time frame as we possibly could. This way of war required countries to out-produce their rivals (which is why the U.S. won the wars). High-output manufacturing prowess was key. But as the Cold War dragged on and computer chips came into being, an alternative way of war started to develop — precision warfare. Instead of saturating an area with vast firepower and hoping to hit your enemy by chance, you could fire weapons that would seek out and destroy their targets.

One reason precision weapons are so powerful is a basic fact of geometry. The damage an explosive does drops off very quickly as the explosion becomes more distant (generally speaking, the radiative heat drops off as the inverse square of the distance, while the strength of the shock wave drops off as the inverse cube). So making a weapon 10 times as precise, meaning it lands 10 times closer to its target, makes it somewhere between 100 and 1000 times as destructive. (Cluster munitions help to solve this problem, but precision is better, especially if you have precision cluster munitions.) The superiority of precision weapons is one reason why U.S.-made HIMARS rocket artillery is so effective against the Russian Army in Ukraine, despite firing only a small number of projectiles.
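The 100-to-1000 range falls straight out of those two falloff laws. Here is a rough sketch, assuming that "10 times as precise" means the typical miss distance shrinks by a factor of 10, and that damage at the target scales as one over distance raised to the falloff exponent. The helper name is hypothetical, and real weapons effects are far messier than a pure power law.

```python
def damage_multiplier(precision_gain, falloff_exponent):
    """Relative damage at the target when the miss distance shrinks by
    `precision_gain`, assuming damage is proportional to
    1 / distance**falloff_exponent (a simplifying assumption)."""
    return precision_gain ** falloff_exponent


HEAT_EXPONENT = 2   # inverse-square falloff (radiative heat), per the text
SHOCK_EXPONENT = 3  # inverse-cube falloff (shock wave), per the text

heat_gain = damage_multiplier(10, HEAT_EXPONENT)
shock_gain = damage_multiplier(10, SHOCK_EXPONENT)

print(heat_gain, shock_gain)  # 100 1000
```

The same function shows why even modest gains matter: under these assumptions, merely doubling precision would give a 4x to 8x improvement.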

Another reason precision weapons are powerful is that they let you hit moving targets from very great distances. Planes, tanks, ships and other armored vehicles simply aren’t safe in a world where missiles know where they’re going. This is why U.S.-made Javelins and Stingers, as well as similar weapons from elsewhere, have been the scourge of Russian tanks, planes, and ships in the Ukraine war.

Computer chips are essential in both delivering precision weapons and evading them. They are a crucial part of guidance systems, cameras and computer vision, other sensors, and so on. And computer chips have become an essential component of modern military vehicles themselves. Of course this all goes double for drones, which are playing an increasingly central role in warfare. And computer chips are absolutely indispensable for lots of other aspects of modern war — electronic warfare, satellites, communications, encryption, you name it.

Given the centrality of computer chips to modern war, a nation would leave itself nearly defenseless if it ran out of supply of high-quality chips in wartime. If computer chips had to be shipped from Taiwan and South Korea, they would be vulnerable to a Chinese blockade. Thus there is a strong national defense argument for promoting and protecting a high-tech, high-volume semiconductor industry here in the U.S. And at least in the U.S., national defense tends to override other concerns.

This is why we’re finally getting our national rear in gear when it comes to government support for the semiconductor industry. It’s part of the War Economy.

The challenge from China

The desire to keep America’s edge in semiconductors prompted the leaders of the 80s to create SEMATECH. But the threat is much greater now. Back then, our chipmakers’ greatest commercial rivals came from Japan, which was a geopolitical and military ally of the U.S. Korea and Taiwan, which have recently given rise to the greatest commercial rivals to companies like Intel, are also our allies or de facto allies. But in the 2020s, our greatest rival for semiconductor dominance is China, our most important and powerful opponent in the emerging Cold War 2. This makes the stakes for industrial policy much higher.

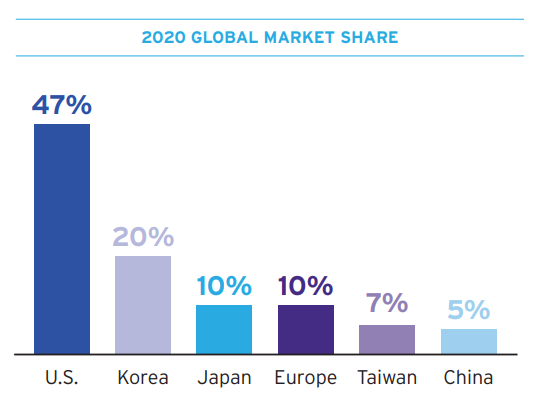

Currently, the U.S. and its allies and quasi-allies hold a commanding position in the field of semiconductor manufacturing. Here is a chart for the overall sector, from a 2021 report by the Semiconductor Industry Association:

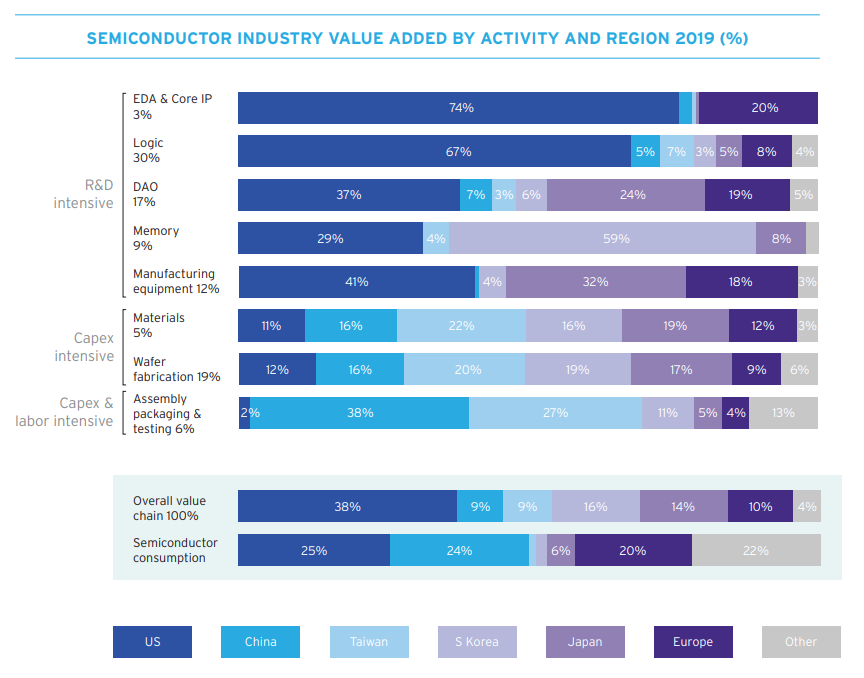

And when you break it down by sector, China only really has a major presence in the low-value-added fields of assembly, packaging, and testing, and even there it’s not dominant:

This presents a big strategic vulnerability for China. China’s manufacturing prowess, including defense manufacturing, depends crucially on computer chips, many of which it imports.

So a few years ago, China’s government embarked on a massive push to make the country self-sufficient in semiconductors. The most detailed explanation of this push is a series of two long posts by Lillian Li and Jordan Nel (part 1, part 2). There’s a ton of great information in those posts, but the basic upshot is that China needs to get good at all the segments of the semiconductor supply chain that it’s not yet good at.

Recently, China’s flagship chipmaker, SMIC, announced a big breakthrough: a 7nm chip. Though it’s only producing these chips at low volume, and though it probably copied (i.e., stole) the manufacturing technology from Taiwan’s TSMC, this is still regarded as an important advance. It would be very foolish to underestimate China’s ability to learn to make computer chips, given its prowess in nearly every other area of manufacturing.

One way that the U.S. is responding to this is with outright economic warfare — denying China access to the extreme ultraviolet lithography (EUV) equipment that it probably needs to make chips better than 7nm. As you can see from the chart above, the highly specialized equipment used to make semiconductors is still overwhelmingly made by the U.S., Europe, and Japan. SMIC and other Chinese companies have tried to buy EUV machines from a Dutch company called ASML, but the U.S. intervened and stopped the Dutch company from selling.

But this sort of soft blockade is unlikely to hold forever; China will either figure out a way around export controls or just steal the technology for the machines. In the long run, the U.S. and its allies will need to stay ahead of China technologically.

(Side note: Staying ahead of China isn’t really relevant to the military technologies of today. Most of the weapons we currently know how to make, like the Javelin ATGMs and HIMARS rocket artillery that have been so useful against Russia in Ukraine, are decades-old systems that use decades-old computer chips that China already has no trouble making. Instead, cutting-edge semiconductors will probably be useful for the weapons of tomorrow, such as smart drone swarms or whatever else we come up with.)

Recently, Dylan Patel wrote a long and pessimistic post entitled “Why America will lose semiconductors”. He writes:

China by far is building the most fabs, which is driven by their favorable tax and regulatory policies as well as massive subsidies. The US is a tiny share of worldwide spending. If the current rate of spending holds, then in a decade, the US will be fabricating less than 10% of the world’s semiconductors and China will be fabricating nearly 30% of them…

The US national, state, and local governments have created tax and regulatory policy that makes investing in new manufacturing capacity for semiconductors incredibly difficult. It takes mountains of money and many years to even get through the process of permitting and approval to begin a project in the US. Furthermore, while these policies intend to protect the environment, they actually don’t. They simply slow down the process and increase costs…

This gap in policy is what has caused Micron, the largest US based memory manufacturer, to offshore manufacturing out of the US. Micron now produces the majority of their memory chips in Singapore and Taiwan.

Patel’s post probably exaggerates the direness of the situation a little bit — South Korea, Singapore and Taiwan are friendly countries, and we shouldn’t be too worried about them gaining market share in certain segments of the semiconductor supply chain (except for the possibility of wartime blockade, which I’ll get to in a bit). And Patel is also just talking about semiconductor fabrication itself, rather than A) chip design, or B) manufacturing of the capital equipment used in fabs. But his post is still a good wake-up call, and it’s a warning that U.S. leaders should heed.

In particular, he points the finger at NEPA-based NIMBYism as a main reason that fabs are hard to build in the U.S. This is the exact same type of faux-environmental NIMBYism that makes it so difficult for the U.S. to build infrastructure and housing and green energy projects. It is utterly unsurprising that it would be strangling the semiconductor fabrication industry as well. There are legislative efforts underway to streamline permitting for energy projects, and these should absolutely be extended to semiconductor fabs as well.

Beyond permitting reform, Patel suggests a bunch of investment tax credits and other incentives to match what other countries (especially China) are doing for their own domestic industries. This makes sense from a strategic perspective, and it’s the main focus of the CHIPS Act.

But the U.S. needs to be doing more than just subsidizing our big chipmakers like Intel to match the subsidies that China is giving its own companies. To truly ensure continued semiconductor dominance, we need to be promoting startups, so that we don’t rely on big old companies to carry the nation. And we need to encourage companies from friendly countries to locate some of their production in the U.S.

Don’t forget about startups and FDI

The CHIPS Act creates a big pot of money to be doled out to chipmakers. Big companies with big names and lots of friends in Washington will obviously have an advantage in terms of access to that pot of money. But as I wrote in Bloomberg a year ago, the history of how the U.S. achieved dominance in the semiconductor industry back in the 1990s gives reason for caution regarding this national-champion-centric approach:

In the 1980s, Japan’s memory chip industry caught up with America’s technologically and started taking away market share. The U.S., which was much more protectionist then than now, freaked out and started a trade war…

None of this managed to save domestic producers of memory chips. The U.S. market share in that industry never recovered from the plunge it took in the early 80s. Japan retained its lead for a few years, then was outcompeted by Korea, which spent big to dominate what by then was an increasingly commoditized and low-margin market. Nevertheless, the U.S. did recover its pole position in the global semiconductor market overall. Because while the world was fighting over memory chips, U.S. companies like Intel switched to making something much more valuable — microprocessors. American CPUs raked in the cash while Asian countries fought over the scraps in the memory industry.

Throwing money at national champions like Intel may be necessary simply to keep these companies competitive against China, but focusing on this strategy to an excessive degree creates several drawbacks. First, supporting incumbents makes it harder for potentially more innovative startups to break into the industry. Second, it can reward bad management choices, essentially throwing good money after bad (since mismanagement by companies like Intel is one of many reasons our champions lost dominance in the first place).

And third, the history of how Intel achieved dominance suggests that the biggest gains for the U.S. chip industry could come not from old companies clawing back their position in existing markets, but from disruptive new technologies scaling up to replace the old ones. Intel already can tap capital markets for expansion reasonably cheaply, while startups need a lot more help scaling up.

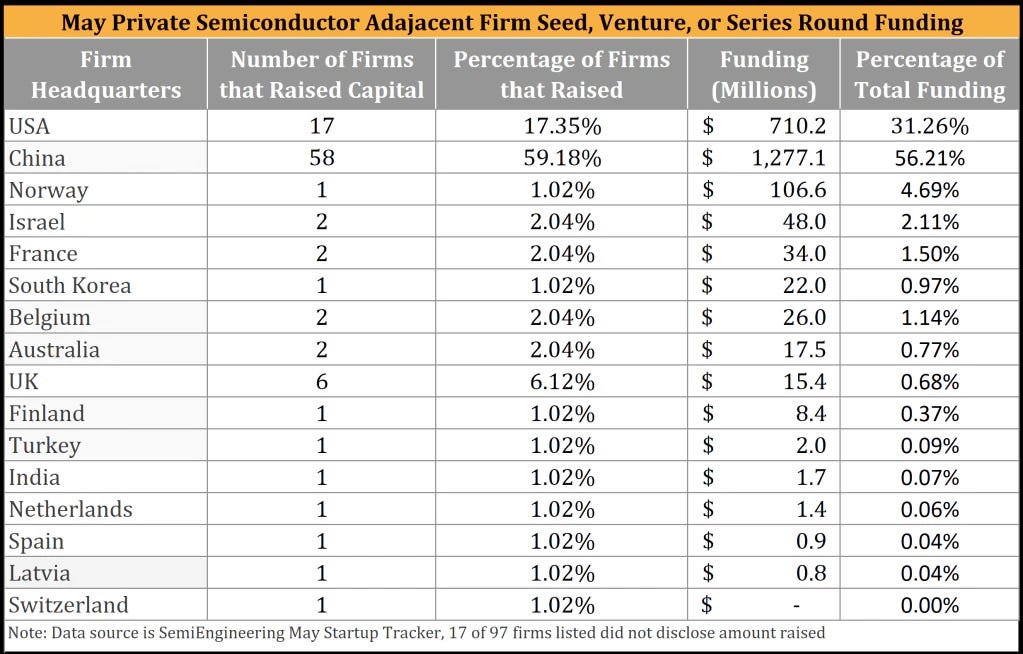

Dylan Patel notes that China is funding large amounts of money at semiconductor startups:

The U.S. should make sure to do the same. There’s a lot of innovation happening in the chip startup space, and our industrial policy should help these companies scale up. Some are hopeful that the CHIPS Act will be able to do this. But if there’s not enough space for discretion in CHIPS’s funding provisions, we should pass a follow-up piece of legislation focused on startups specifically.

One final task for our semiconductor industrial policy should be to encourage FDI. There’s a common conceit that American chips should be made in America by American companies. But in fact, we need foreign companies to make chips in America too.

When it comes to designing chips, the U.S. dominates. But when it comes to actually fabricating — i.e., physically making — the chips, our East Asian allies Taiwan and South Korea are currently in the lead. The most famous example of this is Taiwan’s TSMC, which in recent years has managed to surge ahead of Intel in terms of chip manufacturing technology.

This isn’t a disaster — there’s no reason the U.S. should dominate every part of the chip supply chain, and these are friendly countries whose economies we would like to also be strong. But Taiwan is highly vulnerable to a Chinese blockade should war break out, and South Korea is highly vulnerable to North Korean attack. So in order to protect U.S. supply chains in the event of a conflict, it’s important to get TSMC and Samsung to build some factories in the U.S.

We’re already doing this: TSMC is building a fab in Arizona, and Samsung is building one near Austin, TX. But NIMBYism is going to be as big a problem for these foreign companies as it is for domestic ones, so we need to make sure that permitting reform extends to them as well. And we should remember not to view factories built by companies from allied countries on U.S. soil as a threat. Instead, we should see them as a form of insurance.

Despite these challenges, though, the CHIPS Act represents the rebirth of U.S. industrial policy, after a very long period of neglect. By itself, it’s not enough, but it’s certainly an encouraging start.

All of this will be moot if the US does not figure out a way to keep the international EE and CS PhD students educated in elite US universities. The H1B visa's anachronistic lottery system from the 90s is way overdue to make way for some kind of ranking/points-based system.

It will kill 2 birds with 1 stone: it will largely get rid of the fraud, abuse and low-wage job replacement issues raised by the system's critics, and it will also help retain the best and brightest in the country.

Noah - Just want to add some context.

ASML (my employer) is basically the only company that can make commercial EUV machines. There's an outside chance that China may be able to leapfrog ASML by making advances based on some startup's tech somewhere, but it's not likely - it's like betting on the Russians to make a better stealth jet just because they bought the startup that owns that "invisibility cloak" fabric.

The core problem with EUV [ed: which I've personally worked on!] is that, in the most professional jargon I can summon, IT'S REALLY FUCKING HARD. Even ASML with all of our internal access to EUV IP can just barely keep the machines working. It's not because we're doing anything wrong (at least, not wronger than anyone else in any other industry), it's because the tech is so damned sensitive, it's a (professional jargon warning) FUCKING MIRACLE that it works at all.

Even if some spy magically handed China all of our IP tomorrow, it'd still take their best engineers and scientists at least a decade, if not two, to reverse-engineer an EUV machine. It's not that it's technically unfeasible for anyone else to develop EUV. It'd just cost ~$100-200B over a decade-plus, and no one has that kind of scratch sitting around.

[Ed: This isn't like the Russians reverse-engineering nuclear weapons, where you just need to throw enough money and minds at it to invent a bomb you can manufacture on the cheap at relatively low precision. Rather, it's the highest-precision industrial tooling effort _in the history of mankind_. Reverse-engineering EUV from scratch is about 300 separate Manhattan Project-level efforts, and any one of them can take down the entire enterprise.]

So yeah, as things stand today, the "soft blockade" should give the West plenty of time to build up semiconductor capacity, even under the most wildly pessimistic scenario.